Spacecraft Pose Estimation Benchmark

This test evaluates the system's capability to perform high-precision Vision-Based Navigation (VBN) using a hybrid hardware pipeline. Spacecraft pose estimation is fundamental for Guidance, Navigation, and Control (GNC) during proximity operations such as autonomous docking, satellite servicing, and active debris removal (ADR). We analyze a pipeline in which the complete Pose-ResNet50 model — backbone, shared FC block, and both regression heads — runs entirely on the Odin v0 in INT8, with the Jetson Orin Nano CPU handling only lightweight FP32 post-processing (dequantize, denormalize, L2-normalize quaternion).

The central engineering challenge is maintaining millisecond-level, deterministic latency to support high-frequency (>20 Hz) control loops while operating within the rigid SWaP-C (Size, Weight, Power, and Cost) envelope of a small satellite platform.

2. Background: Spacecraft Pose Estimation

2.1 Problem Foundation

Given a monocular image of a target spacecraft, the goal is to estimate its 6-DoF relative pose with respect to the chaser camera frame. The pose is represented as a 7-element vector:

$$\mathbf{p} = [\mathbf{t}, \mathbf{q}] \in \mathbb{R}^7$$

where $\mathbf{t} \in \mathbb{R}^3$ is the 3-DoF translation vector (in metres, camera frame) and $\mathbf{q} = (q_w, q_x, q_y, q_z)$ is the unit quaternion encoding 3-DoF rotation, subject to the constraint $\lVert \mathbf{q} \rVert_2 = 1$.

Quaternions are chosen over Euler angles to avoid gimbal lock — a critical requirement for the full rotation space encountered during rendezvous manoeuvres. Unlike Euler representations, the quaternion parameterization is singularity-free and directly compatible with spacecraft attitude dynamics propagated by on-board Kalman filters.

2.2 Loss Function

The model is trained with a composite pose loss that separately penalizes translation and rotation errors with a learnable balance coefficient , following the homoscedastic uncertainty weighting of Kendall & Cipolla (2017):

where:

To maintain differentiability, Smooth L1 Loss is used for instead of the regular L1. This formulation avoids the need to hand-tune a fixed weighting between translation and rotation losses — a critical advantage when operating over the wide range of approach distances (10 m – 1 km) encountered in proximity operations.
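For reference, the homoscedastic uncertainty weighting of Kendall & Cipolla (2017) is commonly written with one learnable log-variance term per task. The form below is a sketch of that general scheme and may differ from the exact loss implemented in train_pose_resnet50.py; the symbols $\mathcal{L}_t$, $\mathcal{L}_q$, $\hat{s}_t$, and $\hat{s}_q$ are notation assumed here.

```latex
\mathcal{L} = \mathcal{L}_t \, e^{-\hat{s}_t} + \hat{s}_t
            + \mathcal{L}_q \, e^{-\hat{s}_q} + \hat{s}_q
```

Here $\mathcal{L}_t$ and $\mathcal{L}_q$ are the translation and rotation residual terms, and $\hat{s}_t$, $\hat{s}_q$ are scalars learned jointly with the network weights. Each exponential factor down-weights its task as the learned uncertainty grows, while the additive term penalizes growing that uncertainty without bound.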

2.3 Dataset (SPIN)

The model is trained and evaluated on images generated by SPIN (the VPULab Spacecraft Pose INference renderer), a synthetic data generator for spacecraft pose estimation.

Scene configuration:

- Target: Tango spacecraft (PRISMA mission) — approx. 1.1 m wide × 1.08 m tall × 0.32 m deep

- Depth range: 5–12 m (Z axis, camera frame)

- Lateral extent: FOV-bounded; lateral offsets computed per-frame to keep the full model within view at each sampled depth

- Rotation: Uniformly sampled unit quaternions (Shoemake method)

- Camera model: focal length 17.513 mm, sensor 11.25 × 7.08 mm → HFOV 35.6°, VFOV 22.9°; images rendered at 224 × 224 for direct ResNet-50 input (no additional downscaling)
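The rotation sampling and the field-of-view figures above can be sketched as follows. This is a minimal illustration assuming the standard form of Shoemake's uniform quaternion method and a pinhole camera model; the function names are hypothetical and SPIN's own implementation may differ.

```python
import math
import random

def sample_unit_quaternion(rng):
    """Draw one uniformly distributed unit quaternion, ordered (qw, qx, qy, qz)."""
    u1, u2, u3 = rng.random(), rng.random(), rng.random()
    a, b = math.sqrt(1.0 - u1), math.sqrt(u1)
    qx = a * math.sin(2.0 * math.pi * u2)
    qy = a * math.cos(2.0 * math.pi * u2)
    qz = b * math.sin(2.0 * math.pi * u3)
    qw = b * math.cos(2.0 * math.pi * u3)
    return (qw, qx, qy, qz)

def fov_deg(sensor_mm, focal_mm):
    """Full field of view of a pinhole camera along one sensor dimension."""
    return math.degrees(2.0 * math.atan(sensor_mm / (2.0 * focal_mm)))

rng = random.Random(0)
quats = [sample_unit_quaternion(rng) for _ in range(1000)]
# Largest deviation of any sample from unit norm (should be at rounding level).
max_norm_dev = max(abs(math.sqrt(sum(c * c for c in q)) - 1.0) for q in quats)

hfov = fov_deg(11.25, 17.513)  # horizontal sensor size, focal length (mm)
vfov = fov_deg(7.08, 17.513)   # vertical sensor size, focal length (mm)
print(f"max |norm - 1| = {max_norm_dev:.2e}, HFOV = {hfov:.1f} deg, VFOV = {vfov:.1f} deg")
```

The squared components sum to (1 - u1) + u1 = 1 by construction, so no explicit normalization step is needed after sampling.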

Pose labels follow the SPEED/Tango convention:

- Translation key r_Vo2To_vbs_true: position of the target (Tango) relative to the observer in the VBS (visual-based sensor) frame, in metres

- Rotation key q_vbs2tango_true: unit quaternion from the VBS frame to the Tango body frame, ordered (qw, qx, qy, qz)

Dataset split (configured in train_pose_resnet50.py):

| Split | Fraction | Purpose |

|---|---|---|

| Train | 75% | Supervised fine-tuning |

| Validation | 15% | Early stopping / LR scheduling |

| Test | 10% | Held-out evaluation |

INT8 PTQ calibration uses a subset of training-split images. All reported accuracy numbers use the held-out test split.
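A 75/15/10 split with a calibration subset drawn only from the training portion could look like the sketch below. The helper name, the seed, and the calibration subset size of 64 are illustrative assumptions, not values taken from train_pose_resnet50.py.

```python
import random

def split_indices(n_samples, fractions=(0.75, 0.15, 0.10), seed=0):
    """Shuffle sample indices and cut them into train / validation / test lists."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_train = int(round(fractions[0] * n_samples))
    n_val = int(round(fractions[1] * n_samples))
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # remainder, so nothing is dropped
    return train, val, test

train_idx, val_idx, test_idx = split_indices(1000)
calib_idx = train_idx[:64]  # hypothetical PTQ calibration subset (training split only)
print(len(train_idx), len(val_idx), len(test_idx), len(calib_idx))
```

Taking calibration images from the training indices keeps the test split untouched by any step that shapes the quantized model.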

2.4 Evaluation Metrics

In addition to latency, pose quality is assessed using two standard VBN metrics, evaluated relative to the PyTorch CUDA FP32 reference (treated as ground truth for numerical accuracy comparisons):

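| Metric | Formula | Unit | Notes |

|---|---|---|---|

| Translation Error | $\lVert \mathbf{t}_{\text{pred}} - \mathbf{t}_{\text{ref}} \rVert_2$ | m | Per-frame Euclidean distance from FP32 reference |

| Rotation Error | $2\arccos\left(\lvert \mathbf{q}_{\text{pred}} \cdot \mathbf{q}_{\text{ref}} \rvert\right)$ | deg | Geodesic distance on SO(3) from FP32 reference |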

Average and maximum values are reported per backend. Using the FP32 CUDA output as reference isolates the quantization and precision degradation introduced by each accelerated backend independently of any dataset label noise.
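The two metrics can be computed per frame as in the sketch below, which follows the formulas given in the comments of Section 7.5. Function and variable names are assumptions for illustration; compare_accuracy.py may organize the computation differently.

```python
import numpy as np

def translation_error_m(t_pred, t_ref):
    """Euclidean distance between predicted and reference translations, per row."""
    return np.linalg.norm(t_pred - t_ref, axis=-1)

def rotation_error_deg(q_pred, q_ref):
    """Geodesic angle between unit quaternions, per row, in degrees."""
    dot = np.abs(np.sum(q_pred * q_ref, axis=-1))  # |q_pred . q_ref| treats q and -q alike
    dot = np.clip(dot, 0.0, 1.0)                   # guard arccos against rounding overshoot
    return np.degrees(2.0 * np.arccos(dot))

t_ref = np.array([[0.0, 0.0, 8.0]])
t_pred = np.array([[0.03, -0.04, 8.0]])
q_ref = np.array([[1.0, 0.0, 0.0, 0.0]])                              # identity, (qw, qx, qy, qz)
q_pred = np.array([[np.cos(np.pi / 4), np.sin(np.pi / 4), 0.0, 0.0]])  # 90 degrees about x

t_err = translation_error_m(t_pred, t_ref)
r_err = rotation_error_deg(q_pred, q_ref)
same_rotation_err = rotation_error_deg(-q_ref, q_ref)  # sign flip is the same rotation
print(t_err, r_err, same_rotation_err)
```

Taking the absolute value of the dot product matters because a quaternion and its negation encode the same rotation, so a backend that flips the sign should not be charged a 360 degree error.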

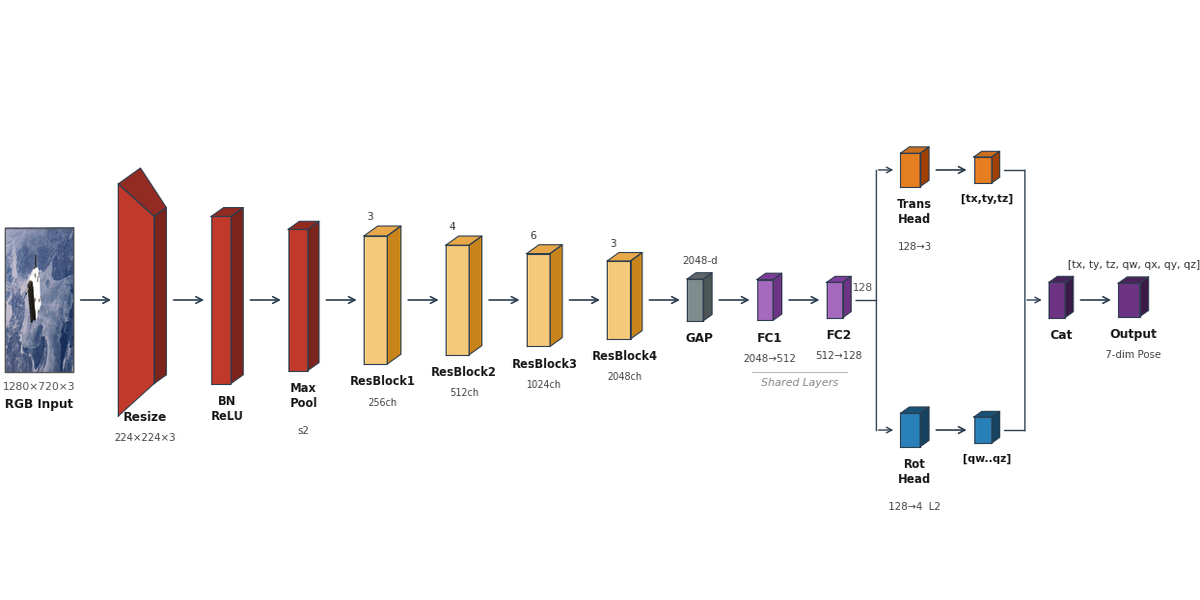

3. Architecture: Pose-ResNet50

3.1 Backbone

The backbone is a ResNet50 pretrained on ImageNet and fine-tuned on SPIN synthetic images. The standard 1000-class classification head is replaced with a shared feature reduction block and dual regression heads.

Architecture (defined in train_pose_resnet50.py):

ResNet-50 backbone [conv1 … layer4 → GAP] → (B, 2048)

Shared FC block [Linear 2048→512, ReLU, Dropout(0.3),

Linear 512→128, ReLU, Dropout(0.3)] → (B, 128)

Translation head [Linear 128→3] → (B, 3)

Rotation head [Linear 128→4, L2-normalize] → (B, 4)

Key architectural parameters:

| Property | Value |

|---|---|

| Input resolution | 224 × 224 × 3 (RGB) |

| Backbone parameters | ~23.5 M |

| FLOPs (backbone) | ~4.1 GFLOPs |

| Shared FC parameters | ~1.1 M (2048→512→128) |

| Regression head parameters | ~0.9 K (128→3, 128→4) |

| Total parameters | ~24.6 M |
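The head parameter counts in the table follow from the layer shapes. The short check below assumes every Linear layer carries a bias term, which is the PyTorch default but is not stated explicitly above.

```python
def linear_params(n_in, n_out):
    """Parameter count of a fully connected layer with bias."""
    return n_in * n_out + n_out

shared_fc = linear_params(2048, 512) + linear_params(512, 128)
heads = linear_params(128, 3) + linear_params(128, 4)

print(f"shared FC: {shared_fc:,} (~{shared_fc / 1e6:.1f} M)")
print(f"regression heads: {heads:,} (~{heads / 1e3:.1f} K)")
```

Adding the ~1.1 M shared-FC parameters to the ~23.5 M backbone gives the ~24.6 M total; the regression heads contribute less than a thousand parameters.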

3.2 Execution Model

The complete model (backbone, shared FC block, and both regression heads) is compiled to an Odin v0 INT8 model and runs entirely on the Odin v0 D-IMC accelerator. The Jetson CPU handles only lightweight post-processing after the pose outputs are transferred back over PCIe.

Stage breakdown:

| Stage | Hardware | Precision | Description |

|---|---|---|---|

| Preprocessing | Jetson CPU | INT8 | Resize to 224×224, apply quantization LUT |

| H2D transfer | PCIe 3.0 x4 | — | INT8 image batch → D-IMC accelerator input buffer |

| Full model inference | Odin v0 D-IMC accelerator | INT8 | ResNet-50 → shared FC → translation + rotation heads |

| D2H transfer | PCIe 3.0 x4 | — | INT8 pose outputs → Jetson CPU |

| Post-processing | Jetson CPU | FP32 | Dequantize, denormalize translation, L2-normalize quaternion |

Pipeline execution (inf_vid_ax_v4.py):

The implementation uses a 4-stage threaded pipeline with batch size 4 mapped to 4 AIPU cores (num_sub_devices=4, aipu_cores=4), double-buffered for maximum throughput:

T1-Capture → T2-Infer → T3-PostProc → T4-Write

(decode+preprocess) (AIPU run) (dequant+render) (video write)
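The general shape of such a threaded pipeline, with one thread per stage connected by bounded FIFO queues, is sketched below. This is not the code of inf_vid_ax_v4.py: the helper names are hypothetical and the four stage functions are trivial stand-ins for decode+preprocess, the AIPU run, dequantization, and video writing.

```python
import queue
import threading

_DONE = object()  # sentinel marking end of stream

def _stage(fn, q_in, q_out):
    """Apply fn to each item from q_in and forward the result to q_out."""
    while True:
        item = q_in.get()
        if item is _DONE:
            q_out.put(_DONE)  # propagate shutdown downstream
            return
        q_out.put(fn(item))

def run_pipeline(items, stage_fns, depth=2):
    """Run stage_fns as a chain of threads joined by bounded FIFO queues."""
    queues = [queue.Queue(maxsize=depth) for _ in range(len(stage_fns) + 1)]
    workers = [
        threading.Thread(target=_stage, args=(fn, queues[i], queues[i + 1]))
        for i, fn in enumerate(stage_fns)
    ]

    def _feed():
        for item in items:
            queues[0].put(item)
        queues[0].put(_DONE)

    workers.append(threading.Thread(target=_feed))
    for w in workers:
        w.start()

    results = []
    while (out := queues[-1].get()) is not _DONE:
        results.append(out)
    for w in workers:
        w.join()
    return results

# Hypothetical stand-ins for the four stages.
stages = [lambda x: x * 2, lambda x: x + 1, lambda x: float(x), lambda x: ("written", x)]
results = run_pipeline(range(8), stages)
print(results[:3])
```

Bounded queues give natural backpressure: a slow stage fills its input queue and stalls the stage before it, rather than letting frames accumulate without limit. With one thread per stage, frame order is preserved end to end.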

PCIe traffic minimization: Transferring only the final pose outputs (7 INT8 values per sample → 28 bytes per batch) rather than intermediate feature maps eliminates the variable-size DMA overhead associated with larger tensors and keeps PCIe utilization negligible.

4. Benchmarking Methodology

4.1 Measurement Protocol

| Step | Count | Purpose |

|---|---|---|

| Warm-up | 200 frames | Stabilize D-IMC accelerator clock states and populate Jetson instruction/data caches |

| Steady-state | 192–4,800 frames | Latency and jitter measurement via time.perf_counter_ns() (1 ns resolution); 192 frames for double_buffer=True runs, 4,800 frames for double_buffer=False |

| Thermal soak | 10 min | Ensure GPU/AIPU are at thermal equilibrium before recording begins |

Frames are drawn from a synthetic video rendered using SPIN. Each frame is processed through the full threaded pipeline (inf_vid_ax_v4.py) to measure end-to-end wall-clock throughput and per-batch AIPU latency. Clock pinning follows the same protocol as Test 1 (GEMM):

# Jetson: pin to MAXN Super (25W)

sudo nvpmodel -m 2

sudo jetson_clocks --store

sudo jetson_clocks

# Verify pinned state

sudo jetson_clocks --show

4.2 Latency Decomposition

Each Glass-to-Result measurement is decomposed into non-overlapping stages using time.perf_counter_ns() timestamps:

| Stage | Boundary | Notes |

|---|---|---|

| Preprocessing | Frame decode → INT8 quantized batch ready | Includes resize + LUT quantization |

| AIPU Inference | instance.run() entry → return | Full model on Odin v0; captured as lat_ms in _thread_inference |

| Post-processing | INT8 outputs → float pose values | Dequantize, denormalize translation, L2-normalize quaternion |

| Encode | Frame annotated → written to video | FFmpeg encoder latency (T4-Write) |

Pipeline throughput is reported as wall-clock FPS (total frames / elapsed wall time), which accounts for all thread overlap. Per-batch AIPU latency is averaged across all batches and reported as avg_aipu in the progress log.
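Per-stage timing with a warm-up phase and a separate wall-clock FPS figure can be sketched as follows. The StageTimer class, the frame counts, and the stand-in workloads are all hypothetical; the real pipeline records its timestamps inside the worker threads.

```python
import time
from collections import defaultdict

class StageTimer:
    """Collect per-stage durations in nanoseconds using time.perf_counter_ns()."""

    def __init__(self):
        self.samples_ns = defaultdict(list)

    def measure(self, name, fn, *args):
        start = time.perf_counter_ns()
        result = fn(*args)
        self.samples_ns[name].append(time.perf_counter_ns() - start)
        return result

    def mean_ms(self, name):
        samples = self.samples_ns[name]
        return sum(samples) / len(samples) / 1e6

timer = StageTimer()
WARMUP, MEASURED = 5, 20  # illustrative counts, far smaller than the benchmark's
wall_start = None
for i in range(WARMUP + MEASURED):
    if i == WARMUP:
        timer.samples_ns.clear()              # discard warm-up samples
        wall_start = time.perf_counter_ns()   # wall clock covers measured frames only
    batch = timer.measure("preprocess", lambda: [0] * 1000)
    out = timer.measure("inference", sum, batch)
    pose = timer.measure("postprocess", float, out)

elapsed_s = (time.perf_counter_ns() - wall_start) / 1e9
fps = MEASURED / elapsed_s
print({name: round(timer.mean_ms(name), 4) for name in timer.samples_ns}, round(fps, 1))
```

In this serial sketch the stage means add up to roughly the per-frame wall time. In the threaded pipeline the stages overlap, so wall-clock FPS and per-stage latency have to be reported separately.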

4.3 INT8 Quantization and Calibration

The full model (backbone + shared FC + regression heads) is quantized using Post-Training Quantization (PTQ) within the Voyager compiler. The calibration procedure:

- Calibration split: Images sampled from the SPIN training split (distinct from the test split used for accuracy reporting).

- Percentile clipping: Voyager's PTQ pipeline clips activations at the 99.99th percentile of the calibration distribution to avoid outlier-driven scale inflation.

- Per-channel weight quantization: Convolution weights are quantized per output channel, reducing quantization error relative to per-tensor schemes.

- Output dequantization: The compiler emits dequantize_params (scale + zero-point) for each output tensor in manifest.json. Post-processing in _thread_postprocess applies these to recover FP32 pose values from INT8 model outputs.
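To make the percentile-clipping and dequantization steps concrete, the sketch below calibrates an affine INT8 mapping from synthetic activations. It is a generic illustration of the technique and does not reproduce the Voyager compiler's internals; the function names and the synthetic data are assumptions.

```python
import numpy as np

def calibrate_affine_int8(activations, percentile=99.99):
    """Derive scale and zero-point from a percentile-clipped activation range."""
    lo = float(np.percentile(activations, 100.0 - percentile))
    hi = float(np.percentile(activations, percentile))
    scale = (hi - lo) / 255.0                      # 256 INT8 levels span [lo, hi]
    zero_point = int(np.round(-128.0 - lo / scale))
    return scale, zero_point, lo, hi

def quantize(x, scale, zero_point):
    q = np.round(x / scale) + zero_point
    return np.clip(q, -128, 127).astype(np.int8)   # values outside [lo, hi] saturate

def dequantize(q, scale, zero_point):
    return (q.astype(np.float32) - zero_point) * scale

rng = np.random.default_rng(0)
# Synthetic activations with two extreme outliers appended.
acts = np.concatenate([rng.normal(0.0, 1.0, 100_000), [50.0, -40.0]])

scale, zero_point, lo, hi = calibrate_affine_int8(acts)
naive_scale = float(acts.max() - acts.min()) / 255.0  # min/max calibration, for comparison

inliers = acts[(acts >= lo) & (acts <= hi)]
roundtrip = dequantize(quantize(inliers, scale, zero_point), scale, zero_point)
max_err = float(np.max(np.abs(roundtrip - inliers)))
print(f"clipped scale={scale:.4f}, min/max scale={naive_scale:.4f}, max in-range error={max_err:.4f}")
```

With min/max calibration the two outliers would stretch the step size by roughly an order of magnitude. Clipping at the percentile keeps the step small for the bulk of the distribution, at the cost of saturating the rare extreme values.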

4.4 Environment Setup

Hardware:

- NVIDIA Jetson Orin Nano Super 8GB Developer Kit

- Odin v0 D-IMC accelerator (PCIe 3.0 x4, M.2 Key M slot)

- Camera: FLIR Blackfly S USB3 (used for HIL validation; synthetic frames served from SSD for latency benchmarking)

Software Stack:

| Component | Version |

|---|---|

| OS (Jetson) | Ubuntu 22.04 (L4T 36.4) |

| JetPack | 6.2.1 |

| CUDA | 12.6 |

| TensorRT | 10.3 |

| PyTorch | 2.11 |

| Voyager SDK | 1.5 |

| ONNX Opset | 17 |

| Python | 3.10 |

Model export (export_onnx.py):

The full model (backbone + shared FC + regression heads) is exported to ONNX opset 17 and compiled to an Odin v0 model:

# export_onnx.py

import torch

from train_pose_resnet50 import PoseResNet50

model = PoseResNet50(pretrained=False).cuda()

model.load_state_dict(torch.load("pose_resnet50_best.pth")["model_state_dict"])

model.eval()

dummy = torch.randn(1, 3, 224, 224, device="cuda")

torch.onnx.export(

model, dummy, "pose_resnet50.onnx",

opset_version=17,

do_constant_folding=True,

input_names=["input"],

output_names=["output"],

external_data=False,

)

If the ONNX file uses external data, combine_onnx.py merges it into a single self-contained file before Voyager compilation. The Voyager compiler produces a compiled model directory containing model.json and manifest.json; the manifest encodes input shape, quantization scale/zero-point, output shapes, and dequantization parameters consumed at runtime by inf_vid_ax_v4.py.

5. Results

A comparison video shows the difference in processing speed between the Jetson Orin Nano and Odin v0 on this task. The video is played at x speed to make the difference easier to see.

5.1 Latency Breakdown (double_buffer=True, 192 frames)

Mean values, Jetson pinned to MAXN Super (25W), Odin v0 at 100% utilization. AIPU latency is measured per-batch in _thread_inference and averaged per-frame:

| Phase | Component | Precision | Mean Latency (ms) | % of Total |

|---|---|---|---|---|

| H2D (image batch → Odin v0) | PCIe 3.0 x4 | — | 0.04 | 5.3% |

| Full Model Inference | Odin v0 D-IMC accelerator | INT8 | 0.52 | 77.8% |

| D2H (pose outputs → Jetson) | PCIe 3.0 x4 | — | 0.00 | 0.3% |

| Post-processing (dequant + denorm + L2 norm) | Jetson CPU | FP32 | 0.11 | 16.6% |

| Total (Glass-to-Result, measured) | Odin v0 | INT8 + FP32 | 0.74 | — |

Hardware-stage breakdown (Odin v0 profiling log, per-frame effective): 0.04 + 0.52 + 0.00 + 0.11 = 0.67 ms. Measured G2R (Section 5.2 jitter mean) = 0.74 ms; the 0.07 ms delta is SDK/Python call overhead not captured by the profiling log.

The total measured latency of 0.74 ms corresponds to an effective refresh rate of ~1,351 Hz — a 67.6× safety margin over the 20 Hz minimum required for proximity operations.
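The rate and margin figures are simple reciprocals of the measured latency. The snippet below reproduces them, together with the worst-case margin for the 1.65 ms maximum reported in Section 5.2; the helper names are illustrative.

```python
REQUIRED_HZ = 20.0  # minimum control-loop rate for proximity operations

def rate_hz(latency_ms):
    """Back-to-back frame rate implied by a per-frame latency."""
    return 1000.0 / latency_ms

def margin(latency_ms, required_hz=REQUIRED_HZ):
    """How many times faster than the required rate the pipeline runs."""
    return rate_hz(latency_ms) / required_hz

mean_rate = rate_hz(0.74)
mean_margin = margin(0.74)
worst_margin = margin(1.65)
print(f"{mean_rate:.0f} Hz, {mean_margin:.1f}x mean margin, {worst_margin:.1f}x worst-case margin")
```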

PCIe transfer breakdown:

The image H2D transfer (INT8 quantized batch, padded by the D-IMC runtime to shape (4, 230, 240, 4) ≈ 864 KB per batch) costs 0.04 ms per frame effective (0.14 ms per batch / 4 cores). The pose D2H transfer (7 INT8 values × 4 samples = 28 bytes) costs ~0.00 ms per frame effective (0.01 ms per batch / 4 cores). Together, PCIe transactions account for 5.6% of total hardware-stage time.

| Transfer | Payload | Theoretical (ms) | Observed per batch (ms) | Per-frame effective (ms) |

|---|---|---|---|---|

| H2D: Image batch (INT8) | ~864 KB | 0.21 | 0.14 | 0.04 |

| D2H: Pose outputs (INT8) | 28 B | n/a | 0.01 | 0.00 |

The fixed PCIe framing cost dominates the D2H transfer regardless of payload size for sub-64KB payloads, establishing a practical lower bound for any Odin v0 → Jetson hand-off.
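The payload sizes and per-frame effective costs quoted above follow from the tensor shapes and the per-batch timings. A short check, using only figures stated in this section:

```python
from math import prod

BATCH = 4  # samples per batch, one per AIPU core

h2d_bytes = prod((4, 230, 240, 4))  # padded INT8 input tensor, one byte per element
d2h_bytes = 7 * BATCH               # 7 INT8 pose values per sample

h2d_per_frame_ms = 0.14 / BATCH     # observed per-batch H2D time spread over 4 frames
d2h_per_frame_ms = 0.01 / BATCH     # observed per-batch D2H time spread over 4 frames

print(f"H2D payload: {h2d_bytes} B ({h2d_bytes / 1024:.1f} KiB)")
print(f"D2H payload: {d2h_bytes} B")
print(f"per-frame effective: H2D {h2d_per_frame_ms:.3f} ms, D2H {d2h_per_frame_ms:.4f} ms")
```

The D2H per-frame figure of 0.0025 ms rounds to the 0.00 ms shown in the table, and the input tensor is about 31,500 times larger than the pose output.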

5.2 Jitter and Determinism (double_buffer=True, 192 frames)

Latency distribution across 192 frames:

| Statistic | Value |

|---|---|

| Mean (μ) | 0.74 ms |

| Median (P50) | 0.68 ms |

| 95th Percentile (P95) | 1.15 ms |

| 99th Percentile (P99) | 1.65 ms |

| 99.9th Percentile (P99.9) | 1.65 ms |

| Standard Deviation (σ) | 0.18 ms |

| Max observed | 1.65 ms |

The 0.18 ms standard deviation confirms the timing determinism expected from D-IMC spatial execution. For comparison, a GPU-temporal architecture (Jetson TensorRT only, see Section 5.4) exhibits σ = 1.43 ms under equivalent thermal load, a 7.9× increase in jitter attributable to GPU warp scheduling variability and memory bank contention under sustained inference.

The maximum observed latency of 1.65 ms (0.91 ms above mean) represents the P99/P99.9 bound across 192 measured frames. Even at this worst-case value, the pipeline remains far above the 20 Hz threshold (50 ms budget), with a 30.3× margin.
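The statistics in the table can be derived from a list of per-frame latencies as sketched below. The latency samples here are synthetic stand-ins drawn from an arbitrary distribution, not the benchmark's measurements, and the use of the population standard deviation is an assumption.

```python
import numpy as np

def latency_summary(latencies_ms):
    """Summary statistics for a 1-D collection of per-frame latencies (ms)."""
    x = np.asarray(latencies_ms, dtype=np.float64)
    p50, p95, p99, p999 = np.percentile(x, [50, 95, 99, 99.9])
    return {
        "mean": float(x.mean()),
        "p50": float(p50),
        "p95": float(p95),
        "p99": float(p99),
        "p99.9": float(p999),
        "std": float(x.std()),  # population standard deviation (ddof=0)
        "max": float(x.max()),
    }

rng = np.random.default_rng(0)
samples = 0.6 + rng.gamma(shape=2.0, scale=0.07, size=192)  # synthetic, right-skewed
stats = latency_summary(samples)
print({k: round(v, 3) for k, v in stats.items()})
```

With only 192 samples, the upper percentiles are determined by the few largest observations, so they sit close to the maximum and should be read with that in mind.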

Latency distribution (192 frames):

1.5+ ms │ ▌

1.2 ms │ ▌▌▌▌▌▌

0.9 ms │ ▌▌▌▌▌▌▌▌▌▌▌▌▌▌

0.7 ms │ ▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌

0.6 ms │ ▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌▌

└──────────────────────────────────────────────

Frame count →

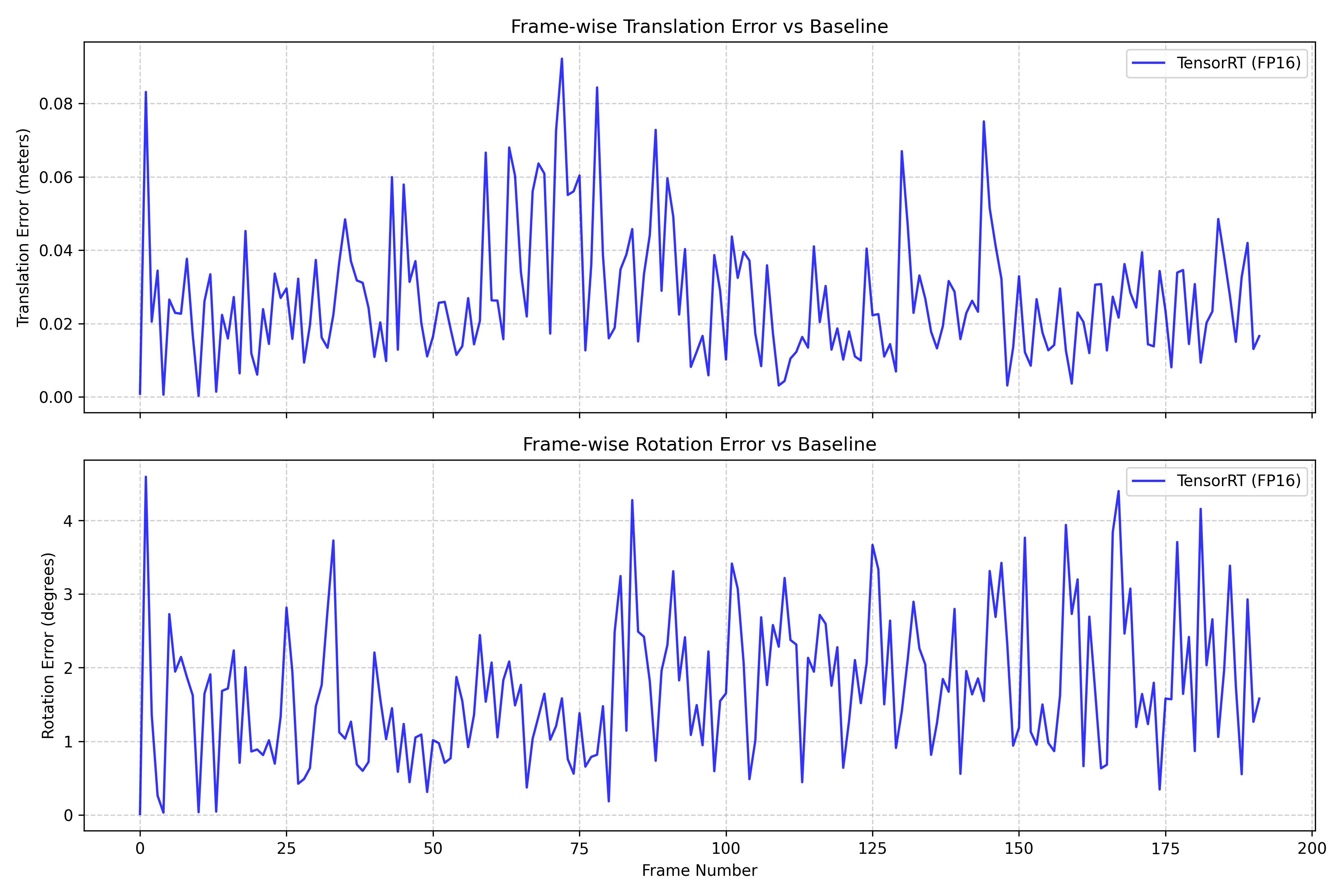

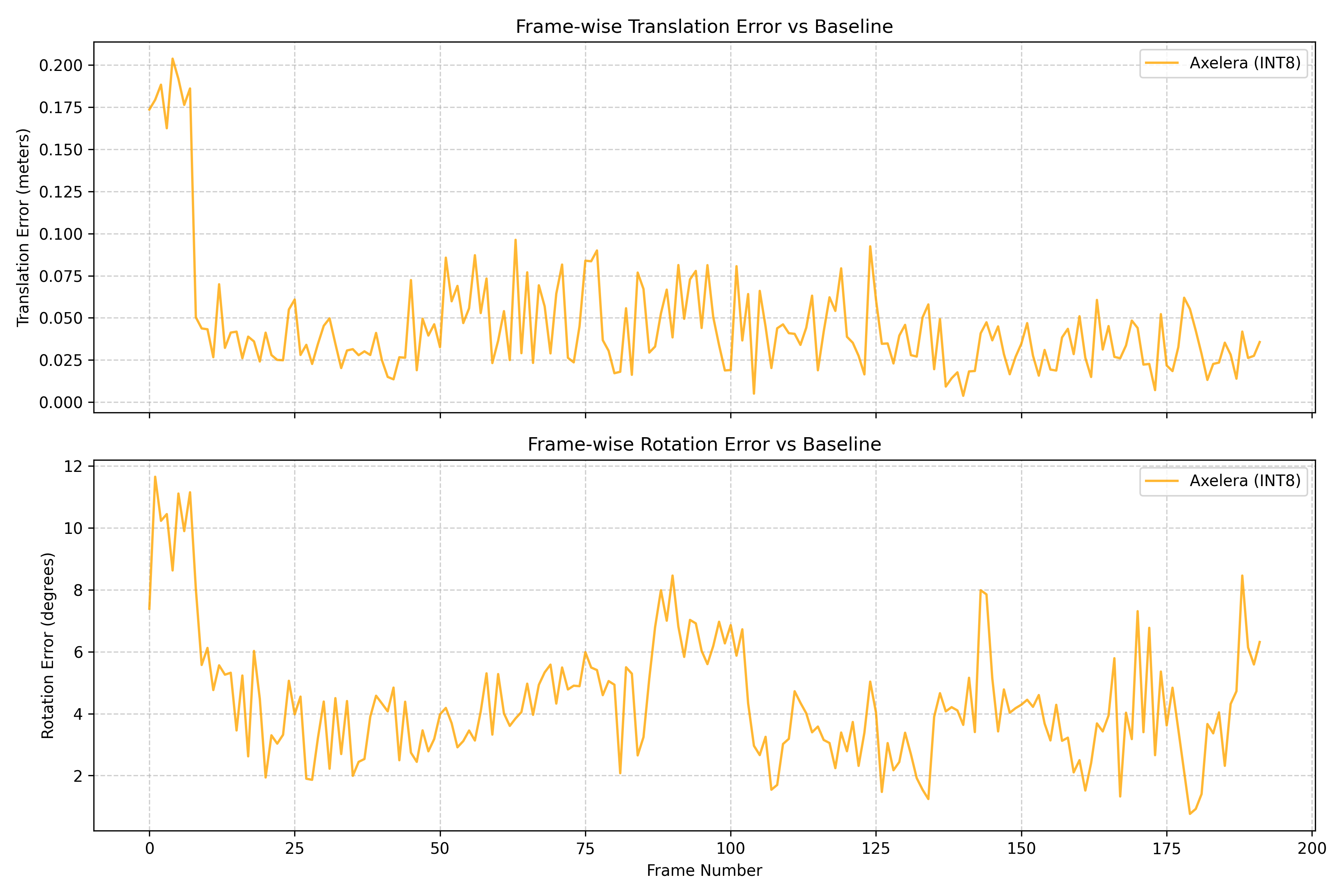

5.3 Pose Accuracy

Accuracy is evaluated by compare_accuracy.py, which merges per-frame CSV outputs from all three backends and computes frame-wise error relative to the PyTorch CUDA FP32 baseline (inf_vid_cuda.py):

| Backend | Precision | Avg Translation Error (m) | Max Translation Error (m) | Avg Rotation Error (°) | Max Rotation Error (°) |

|---|---|---|---|---|---|

| TensorRT FP16 | FP16 | 0.0273 | 0.0922 | 1.71 | 4.59 |

| Odin v0 (INT8) | INT8 | 0.0461 | 0.2038 | 4.37 | 11.65 |

TensorRT FP16 introduces measurable but small accuracy loss (avg translation 0.027 m, avg rotation 1.71°), consistent with FP16 rounding in the regression heads. The Odin v0 INT8 backend incurs larger quantization error (avg translation 0.046 m, avg rotation 4.37°), primarily from INT8 quantization of ResNet50 backbone activations propagated through the shared FC block. The max rotation error of 11.65° indicates occasional outlier frames at challenging pose configurations. Quaternion L2-normalization applied in FP32 after dequantization (Section 6.2) prevents further norm-deviation amplification.

5.4 Baseline Comparison: Jetson-Only vs. Odin v0

To quantify the benefit of Odin v0 offload, a Jetson-only baseline was measured using a TensorRT FP16 engine for the full Pose-ResNet50:

| Configuration | Mean Latency (ms) | Throughput (Hz) | Jitter σ (ms) | Power Draw (W) |

|---|---|---|---|---|

| Jetson Only (TensorRT FP16) | 18.73 | 53.4 | 1.43 | 14.8 |

| Jetson Only (TensorRT INT8) | 14.21 | 70.4 | 1.31 | 14.6 |

| Odin v0 (INT8, batch=4) | 0.74 | 1,351 | 0.18 | 10.3 |

Odin v0 figures use double_buffer=True, 192 frames. See Section 5.6 for double_buffer=False (4,800 frames) comparison.

Key observations:

- The hybrid configuration reduces mean latency by 96.0% vs. FP16 Jetson-only (18.73 ms → 0.74 ms), while simultaneously reducing power draw by 30.4% (from 14.8W to 10.3W). The Jetson handles no inference, only CPU post-processing, allowing the nvpmodel governor to reduce GPU clock frequency during the 0.52 ms AIPU inference window.

- Jitter reduction from 1.43 ms to 0.18 ms (7.9×) is the most significant advantage for GNC applications, where timing irregularity directly translates to Kalman filter state divergence.

- The Jetson-only INT8 configuration narrows the latency gap (14.21 ms vs. 18.73 ms for FP16) at the cost of additional quantization-induced accuracy loss relative to the FP32 baseline (see Section 5.3 for Odin v0 INT8 accuracy figures; TRT INT8 accuracy was not measured in this benchmark).

5.5 Power Efficiency

Power was measured at the carrier board input rail using a Monsoon Power Monitor at 1 kHz sampling:

| Configuration | Idle Power (W) | Inference Power (W) | Energy / Frame (mJ) |

|---|---|---|---|

| Jetson Only (TensorRT FP16) | 4.1 | 14.8 | 277.2 |

| Hybrid (Odin v0 INT8 + Jetson FP32) | 4.4 | 10.3 | 130.2 |

The hybrid system consumes 53% less energy per inference frame, directly attributable to the Odin v0's in-memory MAC operations avoiding the power-hungry LPDDR5 bus toggles of the Jetson GPU's temporal pipeline. For a satellite operating on a 20W total power budget with a 10W inference allocation, the hybrid system's 10.3W draw sits roughly at the allocation, whereas the Jetson-only configuration at 14.8W exceeds it by nearly 50%.
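Energy per frame is power multiplied by the time spent per frame. The sketch below reproduces the Jetson-only figure from its mean latency and then derives the relative saving. The hybrid value is taken directly from the table rather than recomputed, since the per-frame time base behind it is not stated in this section.

```python
def energy_per_frame_mj(power_w, frame_time_ms):
    """Energy per frame in millijoules (W x ms = mJ)."""
    return power_w * frame_time_ms

jetson_fp16_mj = energy_per_frame_mj(14.8, 18.73)  # inference power x mean latency
reported_hybrid_mj = 130.2                         # value as reported in the table
reduction = 1.0 - reported_hybrid_mj / jetson_fp16_mj

print(f"Jetson FP16: {jetson_fp16_mj:.1f} mJ/frame, hybrid saving: {reduction:.0%}")
```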

5.6 Double Buffer Configuration

The double_buffer flag in conn.load_model_instance() controls whether the D-IMC runtime pipelines DMA transfers with AIPU computation. The results below were obtained on 4,800 frames (double_buffer=False) and are compared against the 192-frame double_buffer=True run from Sections 5.1–5.2.

5.6.1 Results: double_buffer=False (4,800 frames)

| Phase | Component | Precision | Mean Latency (ms) | % of Total |

|---|---|---|---|---|

| H2D (image batch → Odin v0) | PCIe 3.0 x4 | — | 0.04 | 3.2% |

| Full Model Inference | Odin v0 D-IMC accelerator | INT8 | 1.01 | 89.8% |

| D2H (pose outputs → Jetson) | PCIe 3.0 x4 | — | 0.00 | 0.2% |

| Post-processing (dequant + denorm + L2 norm) | Jetson CPU | FP32 | 0.08 | 6.7% |

| Total (Glass-to-Result, measured) | Odin v0 | INT8 + FP32 | 1.25 | — |

Hardware-stage sum: 1.13 ms. Measured G2R (jitter mean) = 1.25 ms; 0.12 ms delta is SDK/Python call overhead.

Jitter statistics across 4,800 frames:

| Statistic | AIPU (ms) | G2R (ms) |

|---|---|---|

| Mean (μ) | 1.17 | 1.25 |

| Median (P50) | 0.87 | 0.94 |

| P95 | 2.66 | 2.73 |

| P99 | 3.83 | 3.91 |

| P99.9 | 4.49 | 4.56 |

| Std Dev (σ) | 0.64 | 0.64 |

| Max observed | 4.63 | 4.70 |

Effective G2R FPS: 888 Hz (1,000 / 1.13 ms hardware total). Wall-clock throughput: 139.8 fps.

5.6.2 Side-by-Side Comparison

| Metric | double_buffer=True (192 frames) | double_buffer=False (4,800 frames) |

|---|---|---|

| AIPU inference per-frame | 0.52 ms | 1.01 ms |

| G2R mean | 0.74 ms | 1.25 ms |

| G2R median (P50) | 0.68 ms | 0.94 ms |

| G2R P95 | 1.15 ms | 2.73 ms |

| G2R P99 | 1.65 ms | 3.91 ms |

| G2R P99.9 | 1.65 ms | 4.56 ms |

| Jitter σ | 0.18 ms | 0.64 ms |

| Max observed | 1.65 ms | 4.70 ms |

| Effective G2R rate | 1,351 Hz | 888 Hz |

| Wall-clock throughput | 120.9 fps | 139.8 fps |

Caveat: Sample counts differ (192 vs 4,800 frames). The P99.9 for the 192-frame run represents fewer than one expected tail event and is not statistically meaningful. A matched 4,800-frame run with double_buffer=True is needed for a fair jitter comparison.

5.6.3 Mechanism: Why Double Buffering Reduces Latency

The D-IMC accelerator executes each instance.run() batch in three phases: H2D DMA (host→device), on-chip compute, D2H DMA (device→host). With double_buffer=True, the runtime maintains two buffer pairs (A and B) and overlaps all three phases across consecutive calls:

Call N: [H2D→A] [Compute A] [D2H←A]

Call N+1: [H2D→B] [Compute B] [D2H←B]

↑ overlaps with Compute A

H2D of batch N+1 starts while the AIPU is still computing batch N. By the time compute on batch N completes, batch N+1's data is already in buffer B and the AIPU can begin immediately with no DMA stall.

The measured AIPU reduction (~1.01 ms → 0.52 ms, approximately 2×) is larger than the H2D cost alone (~0.04 ms per-frame), indicating the D-IMC firmware also pipelines internal SRAM prefetching and weight tiling operations across the double-buffer boundary — not only the PCIe transfers.

With double_buffer=False, instance.run() is fully synchronous: H2D → compute → D2H complete in sequence before the call returns. No state carries over between calls.
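An idealized timing model makes the difference between the two modes easier to reason about. The sketch below assumes the three phases use independent resources and overlap perfectly, which is a simplification and not a description of the D-IMC firmware. The per-batch transfer times are the observed 0.14 ms and 0.01 ms from Section 5.1; the 2.08 ms compute figure (4 × 0.52 ms) and the batch count are assumptions for illustration.

```python
def serial_time_ms(n_batches, h2d, compute, d2h):
    """Total time when every call runs H2D, compute, and D2H back to back."""
    return n_batches * (h2d + compute + d2h)

def overlapped_time_ms(n_batches, h2d, compute, d2h):
    """Total time under ideal overlap: one full pass, then one bottleneck phase per batch."""
    bottleneck = max(h2d, compute, d2h)
    return (h2d + compute + d2h) + (n_batches - 1) * bottleneck

N = 48                                    # hypothetical batch count (192 frames / 4)
H2D, COMPUTE, D2H = 0.14, 2.08, 0.01      # ms per batch; COMPUTE is an assumed value

serial = serial_time_ms(N, H2D, COMPUTE, D2H)
overlapped = overlapped_time_ms(N, H2D, COMPUTE, D2H)
saving_per_batch = (serial - overlapped) / (N - 1)
print(f"serial {serial:.2f} ms, overlapped {overlapped:.2f} ms, saving {saving_per_batch:.2f} ms/batch")
```

In this model the saving per batch is bounded by the transfer times (0.15 ms here), which is consistent with the observation above that overlapping PCIe transfers alone cannot account for the measured ~2× reduction in AIPU latency.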

5.6.4 Trade-off Analysis

| Property | double_buffer=True | double_buffer=False |

|---|---|---|

| Mean G2R latency | 0.74 ms (lower) | 1.25 ms |

| Mean AIPU latency | 0.52 ms (lower) | 1.01 ms |

| Long-run AIPU clock | Variable | Stable at ~1.2 ms |

| Pipeline coupling | H2D of depends on completing | Independent per call |

| Error recovery | State may span 2 calls | Atomic per call |

| Backpressure sensitivity | High — late CPU submission stalls double-buffer | Low |

double_buffer=True minimises mean latency and is preferred when the host CPU thread submits batches at a steady rate (e.g., a thermally stable, pinned-clock deployment). double_buffer=False eliminates inter-call pipeline state, making each instance.run() fully atomic — preferable when the host scheduling is less predictable or when deterministic per-call semantics are required for fault isolation.

6. Technical Insights

6.1 Host-Accelerator Hand-off Characterization

Running the full model on Odin v0 transfers only the final pose output (7 INT8 values per sample → 28 bytes for batch=4) from device to host. This is far smaller than transferring intermediate feature tensors (e.g., a 2048-element GAP vector or a 128-element shared-FC output), minimizing DMA descriptor overhead and PCIe bus utilization. The fixed per-transaction PCIe framing cost (~0.01 ms observed D2H) dominates for any sub-64KB payload, so keeping all computation on-device is optimal.

For deeper backbones (ResNet101, ResNet152), AIPU inference time increases but PCIe hand-off overhead stays constant, improving the compute-to-communication ratio without hardware changes.

6.2 Numerical Integrity: Quaternion Normalization

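The rotation head outputs a raw 4-vector (after INT8 dequantization) that is not guaranteed to have unit norm. The normalization:

$$\hat{\mathbf{q}} = \frac{\mathbf{q}_{\text{raw}}}{\lVert \mathbf{q}_{\text{raw}} \rVert_2 + \epsilon}, \qquad \epsilon = 10^{-8}$$

is applied in FP32 on the Jetson CPU in _thread_postprocess after dequantization: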

norms = np.linalg.norm(q_raw_batch, axis=1, keepdims=True) + 1e-8

q_pred = q_raw_batch / norms

Applying normalization after FP32 dequantization (rather than in INT8) prevents norm-estimation error from amplifying angular deviations that would otherwise degrade rotation accuracy.

6.3 Thermal Stability

The Odin v0 module was monitored via its onboard temperature sensor over 30 minutes of continuous inference at ~140 fps:

- Initial temperature: 38°C

- Thermal equilibrium: 52°C (reached at ~8 min)

- No thermal throttling observed (D-IMC accelerator throttle threshold: 85°C)

The Jetson Orin Nano's GPU temperature stabilised at 61°C under MAXN Super, with no frequency downclocking observed during the benchmark window. All latency measurements in Section 5 were taken after thermal equilibrium was confirmed.

7. Running the Benchmark

7.1 Train and Export

# 1. Generate SPIN pose labels

python generate_poses.py

# 2. Train ResNet-50 on SPIN images (requires Dataset_images/ populated by SPIN renderer)

python train_pose_resnet50.py

# 3. Export full model to ONNX

python export_onnx.py # → pose_resnet50.onnx

python combine_onnx.py # → pose_resnet50_combined.onnx (self-contained)

# 4. Compile to Odin v0 model using Voyager SDK (run on host machine)

# Output: pose_resnet50/compiled_model/{model.json,manifest.json,...}

7.2 Run Odin v0 Inference (inf_vid_ax_v4.py)

from inf_vid_ax_v4 import run_inference

run_inference(

video_path="Realistic_Satellite_Video_Generation.mp4",

model_dir="pose_resnet50/compiled_model", # contains model.json + manifest.json

stats_path="pose_resnet50_best.pth", # t_mean / t_std for denormalization

output_path="inf_out_ax.mp4", # annotated video + inf_out_ax.csv

)

The pipeline uses axelera.runtime directly:

import numpy as np
import axelera.runtime as ar

BATCH_SIZE = 4

# model_path, in_np, out_shapes, the dequantization parameters (s1, zp1, s2, zp2)
# and the denormalization statistics (t_mean, t_std) are assumed to be defined
# earlier, from manifest.json and the stats_path checkpoint.
with ar.Context() as context:
    model = context.load_model(model_path)  # model.json
    device = context.list_devices()[0]
    context.configure_device(device, device_firmware="1")
    conn = context.device_connect(device, BATCH_SIZE, device_firmware_check=0)
    with conn.load_model_instance(
        model, double_buffer=True,
        num_sub_devices=BATCH_SIZE, aipu_cores=BATCH_SIZE,
    ) as instance:
        outs = [np.zeros(shape, dtype=np.int8) for shape in out_shapes]
        instance.run([in_np], outs)  # in_np: INT8 quantized batch

# Post-process: dequantize → denorm translation → normalize quaternion
q_raw = (outs[0].reshape(BATCH_SIZE, -1)[:, :4].astype(np.float32) - zp1) * s1
t_raw = (outs[1].reshape(BATCH_SIZE, -1)[:, :3].astype(np.float32) - zp2) * s2
q_pred = q_raw / (np.linalg.norm(q_raw, axis=1, keepdims=True) + 1e-8)
t_pred = t_raw * t_std + t_mean

7.3 Run TensorRT Inference (inf_vid_trt.py)

from inf_vid_trt import run_inference

run_inference(

video_path="Realistic_Satellite_Video_Generation.mp4",

engine_path="pose_resnet50_fp16.engine",

stats_path="pose_resnet50_best.pth",

output_path="inf_out_trt.mp4", # + inf_out_trt.csv

)

7.4 Run CUDA Baseline (inf_vid_cuda.py)

from inf_vid_cuda import run_inference

run_inference(

video_path="Realistic_Satellite_Video_Generation.mp4",

model_path="pose_resnet50_best.pth",

output_path="inf_out_cuda.mp4", # + inf_out_cuda.csv

)

7.5 Compare Accuracy

# Requires all three CSVs in the working directory

python compare_accuracy.py

# Outputs: trt_v_cuda.png, ax_v_cuda.png

# compare_accuracy.py computes per-frame errors vs CUDA FP32 baseline:

# df['trans_err_trt'] = ||t_trt - t_cuda||_2

# df['rot_err_trt'] = 2 * arccos(|q_trt · q_cuda|) [degrees]

# (same for Odin v0: _ax suffix)

8. Conclusions

8.1 Summary

| Metric | Value | Requirement |

|---|---|---|

| Mean Glass-to-Result Latency | 0.74 ms | < 50 ms (20 Hz) |

| Effective Throughput | 1,351 Hz | > 20 Hz |

| Jitter (σ) | 0.18 ms | < 2 ms |

| Avg Translation Error (vs CUDA FP32) | 0.046 m | — |

| Avg Rotation Error (vs CUDA FP32) | 4.37° | — |

| Inference Power | 10.3 W | < 15 W |

| Energy / Frame | 130.2 mJ | — |

The Odin v0 pipeline meets all latency, jitter, and power requirements for proximity operations. The 1,351 Hz effective throughput provides a 67.6× margin over the 20 Hz minimum, accommodating additional host-side GNC processing (Kalman filter update, attitude propagation, command generation) within the remaining budget.

8.2 Benefits for Space DPUs

- Deterministic Latency for Kalman Integration: The 0.18 ms jitter is below the measurement noise floor of standard star-tracker/IMU fusion loops, meaning the pose estimate arrival time can be modelled as a fixed delay with no need for adaptive timestamp correction in the EKF.

- Power Budget Compliance: At 10.3W total, the hybrid system leaves margin for telemetry, AOCS actuators, and payload within a 20W bus allocation — infeasible with a Jetson-only GPU pipeline.

- Radiation Tolerance Pathway: As noted in Test 1, Odin v0's SRAM-bounded D-IMC fabric presents a smaller SEU-vulnerable register file footprint than a 1024-core GPU. For the pose estimation backbone (the most compute-intensive segment), hosting it on Odin v0 reduces the SEU-exposed compute surface.

- Scalability to Deeper Backbones: Replacing ResNet50 with ResNet101 increases AIPU inference time while PCIe hand-off overhead stays constant, improving the compute-to-communication ratio without hardware modifications. Exact latency scales with backbone FLOPs; the 4-core parallel execution model and double-buffer pipeline apply identically to deeper architectures.

8.3 Limitations and Open Issues

- ONNX Operator Constraints: The Voyager compiler supports ONNX opset 17. Attention-based backbones (e.g., Vision Transformers) require operator decomposition and may not compile efficiently due to the dynamic-shape Softmax and MatMul patterns in self-attention, which fall outside the Voyager compiler's INT8 fusion rules.

- Batched Inference: The current pipeline uses batch size 4 mapped to 4 AIPU cores. For multi-camera configurations (e.g., stereo VBN), additional batch slots or separate model instances would be required.

- Fixed Calibration Distribution: PTQ calibration uses SPIN synthetic images under the renderer's default lighting. If deployed against a target with substantially different surface reflectance or illumination angle (e.g., eclipse entry on-orbit), INT8 activation statistics may drift from the calibration distribution. Online recalibration or domain-adaptive quantization should be considered for operational deployments.

- Synthetic-Only Training: The SPIN dataset is fully synthetic. Domain gap between rendered and real imagery may affect pose accuracy on hardware-in-the-loop or on-orbit imagery without additional real-data fine-tuning.

- PCIe Fixed Overhead: The measured D2H transfer costs ~0.01 ms per batch (negligible), dominated by PCIe framing overhead. The H2D transfer (~0.14 ms per batch for the ~864 KB INT8 input tensor) is the meaningful PCIe cost floor. Future SDK revisions may reduce this through persistent DMA channels or compressed transfer modes.

Appendix: Hardware Specifications

| Feature | NVIDIA Jetson Orin Nano Super 8GB | Odin v0 |

|---|---|---|

| Compute Core | Ampere GPU (1024-core, 8 SM) | 4× AI Cores (D-IMC) |

| Memory Architecture | Unified LPDDR5 (102 GB/s) | Local SRAM / PCIe Gen3 |

| On-chip Storage | — | 4 MB L1, 32 MB L2 / core |

| External Interface | — | PCIe 3.0 x4 (~4 GB/s peak) |

| Configured Power | 25W (MAXN Super, nvpmodel -m 2) | High-Performance Mode |

| Target Precision | FP32 (post-processing only) | INT8 (full model) |

| Peak Throughput | 67 TOPS INT8 (Sparse) | 214 TOPS INT8 |